Kiwicon 9 Day 1 Morning

So another year, and I'm back for another Kiwicon. If I have any misgivings about Kiwicon, it's that I didn't start going earlier in its existence. How, one might ask, will they top the previous two years: a metal band opening Kiwicon 7, and a Delorean on stage for Kiwicon 8?

(A pre-emptive apology if you're reading these notes; they're not as complete as I'd like due to technical failures in my note-taking apparatus, so there's less detail and specifics than I'd like.)

HOT LZ: Highway to the Cyberzone - Metlstorm

Metlstorm provides his own version of The Treasurer's Report to the assembled crowd, complete with dreadful clipart-driven PowerPoint. I imagine first-timers beginning to wonder where, exactly, the conference all their mates recommended is. Stuttering through a masterful rendition of the skit, Metl eventually introduces...

Redacted Right Now - Rear Admiral REDACTED

...Amon Ra, two-times winner of the "Most Likely to Be Arrested After the Conference" award. Amon promises to exceed even his own high standards set in previous years by teasing us with the possibility that bugs in Wireshark can be exploited, via the mechanism of sending carefully-crafted payloads into the xKeyscore system, to analyse the NSA.

And what a tease. As he works through his po-faced presentation the sound of helicopters draws closer... closer... closer. Culminating in Amon being lit up by laser sights, commandos storming the stage, and Amon being headbagged and dragged off.

On the one hand, it's well done. On the other hand, it's hilarious. On the third hand... this actually happens to people. It's... uncomfortable humour, for me at least. I hope it was intended that way.

Metl returns in his full conf regalia of camo, a military helmet decorated with wifi antennae, and a glorious pair of ammo belts made entirely of LEDs rippling over Metl's torso. Which brings us to the first talk proper...

The Internet of Garbage Things - Matthew "mjg59" Garrett & Paul McMillan

Matthew gives us a run-down on the basic idea of the Internet of Things: you have a commodity item which does a simple job very well, such as a light switch, that you can sell for $20. You add an 802.11 module and now you can sell it for $200. Which is a big win when you consider that the module only costs a few dollars from your OEM. It's less of a win when you consider that software is awful, so adding software to something that works is unlikely to improve it.

Matthew then goes through the basics of what he finds: most IoT devices run Linux ("sorry, BSD people, we didn't find any running BSD") or an embedded OS. This is relevant to his interests in two ways; the first is GPL enforcement, because he wants to know who has been running his software without providing source, and the second is to understand how terrible the security is. The answer to the first question is probably depressingly awful (as it tends to be in the embedded device space), but that's not why he's here, so Matthew details the hilarity of what he finds:

- A UDP listener that waits for packets with a magic string containing the word "Backdoor" and then executes the payload as root.

- All sorts of horrid debug servers that allow for remote root code execution with only a little more effort than the above.

- Service discovery is all based off libupnp which tends to be riddled with vulnerabilities in shipping devices.

- Calls out to remote servers which don't use SSL, or if they do, don't bother doing cert checks, so are trivial to intercept.

- Scheduler APIs which effectively allow you to remotely insert entries into root's crontab. No points for guessing what this gives you.
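To make the "don't bother doing cert checks" point concrete, here's a minimal sketch (mine, not from the talk) using Python's standard `ssl` module. The function names are made up for illustration; the first context is what a client should use, the second is roughly what the devices described above are doing.

```python
import ssl


def verified_context() -> ssl.SSLContext:
    """Client context that validates the server's certificate chain and hostname."""
    return ssl.create_default_context()


def unverified_context() -> ssl.SSLContext:
    """What NOT to ship: encryption without authentication, trivial to intercept."""
    ctx = ssl.create_default_context()
    # Hostname checking has to be switched off before verification can be disabled.
    ctx.check_hostname = False
    ctx.verify_mode = ssl.CERT_NONE
    return ctx


if __name__ == "__main__":
    good, bad = verified_context(), unverified_context()
    print("verified:  ", good.verify_mode, good.check_hostname)
    print("unverified:", bad.verify_mode, bad.check_hostname)
```

With the second context anyone sitting on the network path can present their own certificate and the client will accept it without complaint.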

And so on and so forth. Matthew goes on to detail the tooling he uses to discover this: nmap and binwalk are the main items. binwalk lets you unpack the filesystem shipping with the Garbage Thing you've purchased, which is well worth doing given how crappy the code shipping with it generally is (see above). At this point, Matthew hands over to Paul, who goes into detail about the finer points of ripping the software out of the hardware in the first place, all of which is a lot cleverer than I am. Some points:

- Little, if any, of this stuff has any meaningful protection. The firmware isn't encrypted or locked in any way, which is great for doing stuff with your device (yay!) but not so good if it stores any kind of user data (boo!).

- Preventing devices from booting (and thence accessing their storage, preventing you from reading it) can be one of the trickier bits.

- Being careful with voltage is important. Most storage is 3.3V, but finding the odd unit that is 1.8V is an expensive mistake.

- Much of the work is relatively straightforward, but if you aren't careful with your soldering and pin manipulation techniques, you will break a lot of $200 devices.

- The best way to avoid this, if you're going to be working with a given device a lot, is to completely desolder the storage and install an old-school socket to drop it in (and out of).
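Once you have a raw dump off the storage, the unpacking step is less magic than it sounds. As a rough illustration of the idea behind a tool like binwalk (a toy of my own, not how binwalk is actually implemented and not something shown in the talk), you scan the blob for well-known magic numbers and note the offsets. The function name and the tiny signature table are assumptions for the sketch; real tools know many more formats and validate the headers they find.

```python
from typing import List, Tuple

# A few well-known magic numbers; a real scanner carries hundreds of these.
SIGNATURES = {
    b"hsqs": "SquashFS filesystem (little-endian)",
    b"\x1f\x8b\x08": "gzip compressed data",
    b"\x27\x05\x19\x56": "uImage header",
}


def scan_firmware(blob: bytes) -> List[Tuple[int, str]]:
    """Return (offset, description) for every known magic found in blob, sorted by offset."""
    hits = []
    for magic, description in SIGNATURES.items():
        start = blob.find(magic)
        while start != -1:
            hits.append((start, description))
            start = blob.find(magic, start + 1)
    return sorted(hits)


if __name__ == "__main__":
    # Fake image: a uImage header, some padding, then a SquashFS filesystem.
    image = b"\x27\x05\x19\x56" + b"\x00" * 60 + b"hsqs" + b"\x00" * 32
    for offset, description in scan_firmware(image):
        print(f"{offset:#010x}  {description}")
```

Each hit is a candidate offset to carve from and hand to the matching decompressor or filesystem tool.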

The most secure device they found was online Barbie, which actually had a bunch of fairly sane software and behaviours; hence:

- "Barbie is better than you."

- "If you can't do security better than Barbie, just go home. No computer for you."

(Barbie, incidentally, has a PCB that has to be shaved down to fit her waist-to-boob ratio. The comments write themselves...)

Hack the AO: Cyber th***ht lea***ship on the Battlefield

Faz

Overall I thought this was a good talk that could have been a great one - it was Faz's first time, and his nerves showed in him being a bit hurried; the talk sounded a bit less well-thought-out than it actually is.

But doing these things is fucking nerve-wracking. So let me appreciate it as a good talk that deserves thinking about. And I have - some of what follows is direct from Faz's comments and slides, and some of it's "things Faz made me think about." Any stupidity is mine.

Faz gave us a rundown of his background: as well as being a security geek, he's a Signals reservist in the Australian army. This gives him a very interesting, and thematically appropriate, perspective on how infosec is developing.

Flaws in Military Thinking.

Faz started out talking about the flaws in modern military thinking; he rolled out the old saw about generals fighting the last war - and noted that the last "proper" war, in the sense of the kind of battlefield conditions that allowed orthodox large-scale deployments, was... the Korean War. The lessons of the Korean War informed the "blowing things up" strategy, tactics, and logistics of the Australian army. And with the move of the military into cyber, this is troubling for a number of reasons:

- The military is still fundamentally focused on blowing shit up. Yes, the Australian army may (like the New Zealand one) not have the same reputation for being psychopathically careless around weddings, hospitals, or civilians generally as, say, the US. But their briefing material tends to lead down the path of blowing stuff up as the final say in a disagreement, which is how you wind up with drones blowing up civilians who were standing too close to the wrong SIM card.

- The Korean war is a shitty operating model for modern shooting engagements like East Timor, the former Yugoslavia, Syria, Iraqi urban warfare, and so on and so forth. It's even more terrible for "cyber" when we bear in mind that your adversaries may range from actual state-backed hackers trying to damage or destroy critical systems and infrastructure (because hospitals and hydro dams should be secure, but they probably aren't) to civilians doing essentially harmless/nuisance level LOIC attacks, trolls, conventional criminals, infosec researchers, red teams, and many, many other possibilities, all meshed in together. Shooting, shelling, or bombing is like razing a city block in response to a kid chucking a rock at a tank.

- Still stuck in a model of doing without understanding, which may be important for many orthodox (Korean war!) battlefield situations, but is terrible for infosec.

- Defence papers/positions are often dominated by defence industry positions. Which means that "Cyber" will be dominated by the same corrupt nonsense that sees the Australian Navy with boats that don't float, I guess. And, of course, the more disturbing problem that peace is a problem for the defence industry, while eternal war is a win.

Flaws in applying military thinking to the civilian world.

- The military are not at the cutting edge. "The AU military are still using Windows XP in places." Faz noted that while the idea of the military as cutting edge in computing and signals was once true, it hasn't been that way since the 70s.

- They aren't solving the problems you need to solve, unless you too can call down airstrikes.

- The top-down model of obedience is awful.

And yet people are desperate to import "military thinking" into "Cyber" for the private industry. I'd note that my experience of "Team Defence" (those of us not actually working in pen testing/research/whatever) includes quite a few people who would desperately love to be spooks or military, so it's perhaps unsurprising they have the kind of groupie approach Faz is concerned about.

Fear and Loathing on your Desk: BadUSB, and what you should do about it

Robert Fisk

Robert's background is electrical engineering; he also has a sideline in protecting Chinese New Zealanders and New Zealand residents from hostile, state-level adversaries, which seems like a pretty challenging hobby. This leads, naturally, into threat models. Which includes our good, useful, and rather dangerous friend, the USB port.

The (security) Problems With USB

Robert walked through the three types of USB attacks:

- Untrusted input. USB is a complex protocol, and many, if not most, USB drivers are written to a budget of pennies, not dollars, much like the hardware itself. Bugs and shitty behaviours therefore abound. And that's just for accidental problems. There are incredibly sophisticated exploits available here - such as, for example, a device which uses well-known BIOS, EFI, or UEFI vulnerabilities to wait for a boot event, attack the BIOS, install a malicious payload on the machine, and then continue booting as though nothing had happened.

- USB hubs are a design feature of USB: you are supposed to be able to have multiple USB devices hanging off a single USB plug. This is by design. Unfortunately it also leads to attack vectors like, say, putting a USB hub and a USB keylogger inside a mouse. Sure, you see the mouse; what you don't see is the HID device logging your keystrokes to the mouse storage, for example.

- Intercepting your data on the wire. This is an interesting one - there's nothing to stop a USB device from hoovering up the data on the wire before it hits your device. That may not be a problem with a mass storage device for a user sophisticated enough to use BitLocker or dm-crypt, but it presents problems otherwise.

USB Condoms

Robert then described what a more secure USB interface might look like:

- Constraining the commands on the wire to a sanitised set the host knows it can cope with solves most of the problems with the first set of attacks.

- Locking down what gets presented to the USB host works here: if you're plugging in a keyboard, filtering out everything except a single HID device (so locking out mass storage or hubs, for example) mitigates these sorts of attacks, at the cost of reduced functionality.

- There aren't any real magic bullets in this space, unfortunately.
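The second mitigation is easy to picture in code. This is a toy model of my own, not Robert's implementation: it pretends a device is just a list of interface class codes and passes through only what's on an allow-list. The class codes are the standard USB ones; the function name and the "at most one interface" rule are assumptions made for the sketch.

```python
from typing import FrozenSet, Iterable, List

# Standard USB class codes.
USB_CLASS_HID = 0x03
USB_CLASS_MASS_STORAGE = 0x08
USB_CLASS_HUB = 0x09


def filter_interfaces(
    interface_classes: Iterable[int],
    allowed: FrozenSet[int] = frozenset({USB_CLASS_HID}),
    max_interfaces: int = 1,
) -> List[int]:
    """Return the interface classes a hypothetical filtering proxy would show the host.

    Anything not on the allow-list is dropped, and at most max_interfaces survive,
    so a "mouse" that also announces a hub or storage is cut down to one HID interface.
    """
    passed = [cls for cls in interface_classes if cls in allowed]
    return passed[:max_interfaces]


if __name__ == "__main__":
    dodgy_mouse = [USB_CLASS_HUB, USB_CLASS_HID, USB_CLASS_HID, USB_CLASS_MASS_STORAGE]
    print([hex(cls) for cls in filter_interfaces(dodgy_mouse)])
```

The cost is exactly the one noted above: a legitimate combined keyboard-and-hub would lose its hub too.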

So how to go about doing this? Robert has been working on a combined hardware and software stack to do exactly this: the prototype, which is built with off-the-shelf single board computers and free software, consists of a pair of SBCs wired together over an SPI bus. Robert described the toolchain he's used to build the proxying software; it currently guards against the first two USB attacks (noted above) pretty well, albeit with some caveats: for example, throughput is limited to USB 1-ish transfer rates (although this is improving).

If you're interested, you can build your own for maybe $100.

At the moment he's beta testing the evolution of this into a final, productionised device, one which will be a single, small form-factor USB device about the size of a couple of USB sticks, and will be consumer-friendly(ish). He hopes that will provide USB 2 transfer speed performance, but noted that given the cost and complexity of building high-speed circuits it's unlikely that he'll ever manage USB 3 (which requires complex multi-layer PCBs and near-perfect routing to achieve anything close to the theoretical 5 Gbps).

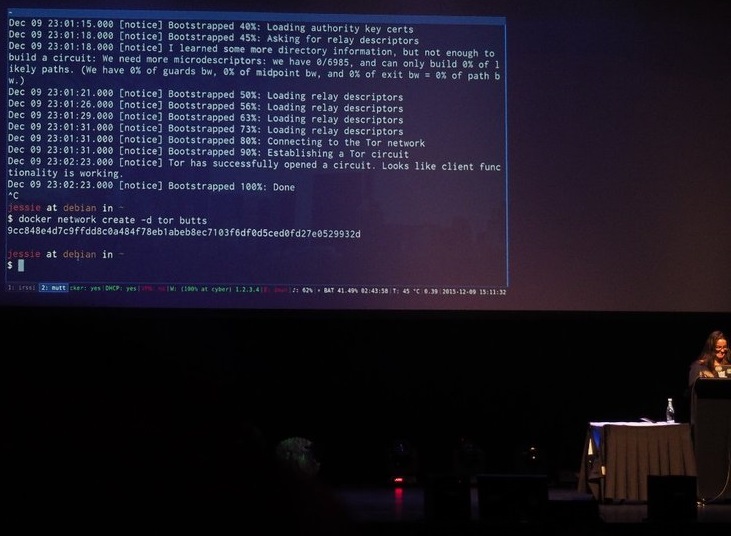

Staying Anonymous Online with Containers

jessfraz

Jess is a core maintainer for Docker. She was, at one point in her talk, quick to note that "core" means she neither knows nor cares about your issues with images, from which I assume she's suffered through having folks bend her ear about that sort of thing...

Jess's talk revolved around how you can use Docker to make it easy to:

- Container ALL THE THINGS.

- Route ALL THE CONTAINERS.

- Anonymize ALL THE ROUTES.

In particular, she wanted to demo a couple of features in newer versions of Docker ("which no-one will ever use because we add cool features and then they get ignored", she noted, with an air of resignation that would not be out of place coming from Marvin the android): the ability to write network plugins to make it easier to configure complex network setups, and the ability to share networks across multiple containers.

Now, because I didn't have my note-taking apparatus (because technology is awful), I don't have a lot of notes on the detail here. But even if it had been working I may have struggled to hear over the giant, clanking brass balls of a speaker who is brave enough to make their whole frickin' talk a live demo.

Yes, really. Most speakers are happy not to tempt the demo gods by sticking to a five minute quickie at the end of their session, leaving themselves the escape route of blathering for a little longer if things don't work out, but this was solid type-and-talk with full screen terminals in front of One Thousand and Five Hundred People.

My typing goes to shit if a couple of people are watching.

Anyway, the demo gods did have their little jab ("Butts? Uh, I guess I forgot to remove that test network earlier"), but things went pretty smoothly overall: Jess showed off being able to, for example, run up Chrome in a container, routing all its traffic through a tor proxy (running in another container). The next step was chaining proxies together - Chrome to privoxy to tor to the 'tubes. And then an OpenVPN container. And so on and so forth. A lot of magic happened, but it happened with much less drama than you might think.

The Password Hashing Competition

Peter Gutmann

Peter wanted to talk to us about passwords, and encryption, and competitions.

Passwords and Their Genesis

In the beginning, there were single-user punchcard-driven monstrosities, and security was not needed.

Then came time-sharing, and it turned out people will, well, hack. So time-sharing systems introduced passwords. In plain text files. The ability to read other people's credentials left passwords less effective than you'd have hoped.

So MULTICS decided that perhaps passwords should be encrypted at rest, and introduced techniques that are still in use today[1] (one-way hashes, many rounds of encryption to make brute-forcing difficult, and so on); so naturally, when Unix was developed, the first things jettisoned were these lessons, and passwords went back to being in a plain text file. Eventually, the lessons were re-learned the hard way and shadow files and such showed up.

In the meantime, it became clear that, as computers became more and more powerful, simple encryption algorithms would no longer cut it. Peter detailed the measures and countermeasures (many of which, because software is awful and you shouldn't write your own encryption, were one step forward, two steps back[2]) to keep passwords secure: algorithms became more complex, trying to be more expensive, in terms of CPU and memory footprint, to brute force; using salts to make table-based attacks harder, and so on and so forth.
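For anyone who hasn't seen the salt-plus-work-factor idea written down, here's a minimal sketch using PBKDF2 from Python's standard library. This is my illustration rather than anything Peter showed; the iteration count is an arbitrary placeholder, not a recommendation, and PBKDF2 only raises the CPU cost (memory-hard designs are a separate matter).

```python
import hashlib
import hmac
import os
from typing import Optional, Tuple

ITERATIONS = 200_000  # illustrative work factor only


def hash_password(
    password: str, salt: Optional[bytes] = None, iterations: int = ITERATIONS
) -> Tuple[bytes, int, bytes]:
    """Return (salt, iterations, digest); a fresh random salt is made if none is given."""
    if salt is None:
        salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, iterations)
    return salt, iterations, digest


def verify_password(password: str, salt: bytes, iterations: int, expected: bytes) -> bool:
    """Recompute the digest with the stored salt and compare in constant time."""
    candidate = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, iterations)
    return hmac.compare_digest(candidate, expected)


if __name__ == "__main__":
    salt, rounds, stored = hash_password("correct horse battery staple")
    print(verify_password("correct horse battery staple", salt, rounds, stored))
    print(verify_password("Tr0ub4dor&3", salt, rounds, stored))
```

The per-user random salt means two people with the same password get different digests, which is what makes precomputed tables useless; the iteration count is the knob that makes each guess expensive.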

NIST, the US government organisation that helps define security and encryption standards, selected and published a number of algorithms over the years; since they had the advantage of being designed and reviewed by people who actually knew what they were doing, this has generally been helpful. Then, NIST had a new idea.

A NIST Competition

For the most recent iteration of the SHA family, SHA-3, NIST decided to run a competition: you could submit your algorithms, and NIST's experts would pick a winner, as measured against a set of criteria. This was, in theory, an excellent approach. It generated tremendous interest, but went a bit off the rails, in Peter's opinion, for a number of reasons:

- The winner was controversial, since it was felt it had some significant problems, particularly around performance.

- The winner was tweaked to accommodate the feedback - but this made people even more unhappy, since they felt that if both the criteria and the entry could be tweaked post-facto, the right thing to do would be to review whether other algorithms were actually better fits.

- So NIST backed down and went with the original winner in unmodified form.

- But this meant the original problems returned: Peter characterised the new SHA as being criticised for not offering significant benefits over the existing ones.

But Peter, and others, did like the idea.

An Open Competition

And so in 2012 a long list of luminaries (and a "hippie from Auckland") kicked off the Password Hashing Competition. Peter was very enthusiastic about the process overall; as it unfolded over a couple of years, he found that:

- There were many submissions (24), ranging from poorly-formed code-only implementations with no explanation, to thesis-length specifications explaining detailed rationales for design decisions, along with well-implemented code.

- Rather than the traditional academic cryptographic research model, where you present at a conference, then a year later someone presents problems with your proposed algorithm, and a year after that you provide an update, all at successive conferences, all the critiques happened on mailing lists; as such the judging panel's feedback about the strengths and weaknesses of particular approaches was acted on in weeks, rather than years.

- As such, the selected algorithms (and the close runners up) had heavily evolved from their original approaches, cribbing from one another.

- The open discussions and open competition were therefore tremendously helpful in improving the quality of the whole field.

- Learning from the NIST problems, the panel were careful to select against their published criteria.

Peter gave a more detailed overview of why they chose the eventual winner, Argon2, but also of a couple of the nearly-there candidates. In particular he praised entries which relied less on simply "making existing techniques harder", something which has tended to unravel fairly quickly in the face of improved hardware (CPUs, multicore, GPGPUs, ASICs, etc), and instead tried mathematically novel techniques. But his highest praise was reserved for the open process and the way entrants used it to improve their work quickly; it's something he'd strongly recommend for future attempts to solve these sorts of problems.

[1] Well, except on web sites containing your credit card data or details of your attempts to have affairs with bots, I will note. I can only assume Peter spends most of his time looking at system security, because if he considers the state of the art in *ix lamentable I think he might suffer something fatal if he followed web standard practices.

[2] Digital, for example, decided to extend the password length in their Unix flavour to more characters, which was good, and then implemented it as an 8 and 5 character round of encryption, which meant you now had a less secure password, since breaking the 5 characters first let you effectively speed up the attack dramatically.
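To put rough numbers on the Digital example: if the two chunks can be attacked independently, the work is the sum of the two search spaces, not the product. A back-of-envelope sketch, assuming (my assumptions, not figures from the talk) a 13 character password drawn from the 95 printable ASCII characters:

```python
from typing import Iterable

ALPHABET = 95  # assumption: printable ASCII


def search_space(alphabet: int, chunk_lengths: Iterable[int]) -> int:
    """Worst-case guesses when each chunk can be brute-forced on its own."""
    return sum(alphabet ** length for length in chunk_lengths)


if __name__ == "__main__":
    hashed_whole = search_space(ALPHABET, [13])    # all 13 characters hashed together
    hashed_split = search_space(ALPHABET, [8, 5])  # 8 and 5 hashed separately
    print(f"hashed together: {hashed_whole:.2e} guesses")
    print(f"hashed as 8 + 5: {hashed_split:.2e} guesses")
    print(f"the split is roughly {hashed_whole // hashed_split:,} times cheaper to attack")
```

In other words the extra five characters buy almost nothing over the old eight character scheme, and the short chunk falls first.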